The Security PM Gap

Security Product Management Needs Its Own Playbook

Disclaimer: Opinions expressed are solely my own and do not express the views or opinions of my employer or any other entities with which I am affiliated.

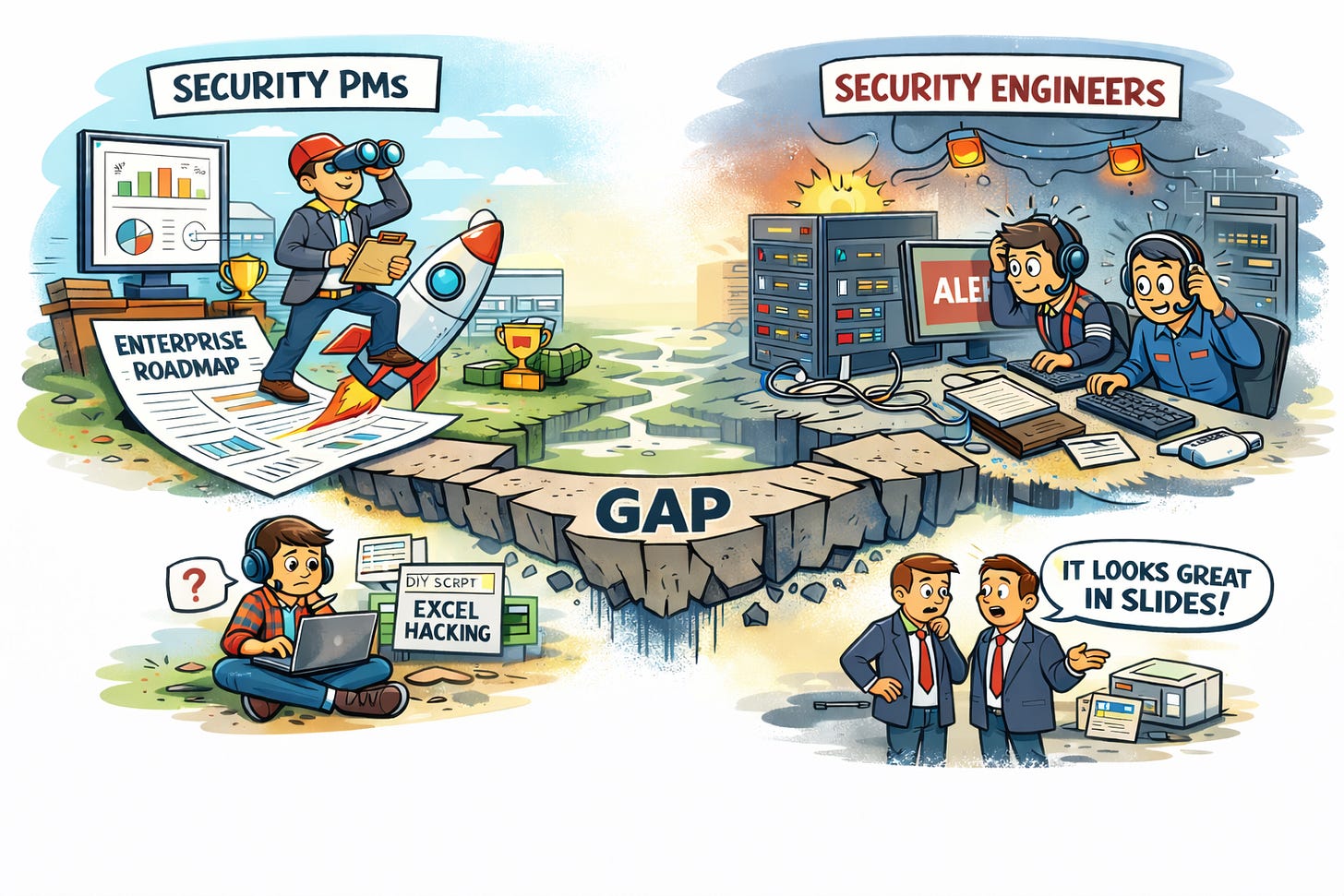

There’s a structural problem in how the security industry builds products, one that rarely gets named, even though it impacts everything from detection quality to incident response to customer trust. It’s the way product management operates inside security companies, and it has less to do with individual PMs and more to do with the fundamental mismatch between traditional product thinking and the reality of defending systems under pressure.

Security product managers are often smart, capable people who have led successful products in healthcare, fintech, productivity, or consumer SaaS. Yet, when these same PMs are placed into a security company, something changes, not because they suddenly become less competent, but because the mental models they rely on no longer map to the environment they’ve entered. Security is not just another vertical. It has its own physics, its own constraints, and its own version of truth.

And when those constraints are misunderstood, or only partly understood, the decisions ripple across everything: the roadmap, the customer experience, the trust that defenders place in their tools, and ultimately, whether the product can be relied upon when it matters most.

The “Normal” Product Playbook Doesn’t Work Here

Traditional PM training focuses on personas, conversion funnels, engagement patterns, UX flows, and incremental improvement. In most industries, this approach works beautifully. You can A/B test a checkout flow. You can optimize a retention funnel. You can prioritize features based on survey feedback and revenue impact.

But security doesn’t behave like that.

Security tools operate in a world where attackers adapt faster than roadmaps, where “engagement” is irrelevant compared to accuracy, where batch jobs can translate to hours of blind spots. Real-time data determines whether an incident is contained. Search performance directly impacts time-to-triage. False positives erode trust faster than any UX issue. None of this is intuitive unless you’ve lived the rhythms of defending production systems. It’s nearly impossible to understand why a 10-second query delay is catastrophic until you’ve been the person waiting those 10 seconds during a live incident.

So the problem isn’t that PMs are unqualified. The problem is that the industry places them into a domain that requires a completely different intuition, one grounded not in product-market fit, but in threat-model alignment.

The Search That Determines Whether the Product Matters

If there is one element that exposes this mismatch more than anything, it’s the search experience inside security tools.

Security engineers often say it quietly but firmly: if search is slow or inconsistent, we move to the next tab.

This isn’t a preference issue. This is a workflow reality.

During breaches, investigations, and triage, everything starts with a query: “Show me all assets using this vulnerable library.” “Which repos imported this dependency?” “Where did this IP interact with our systems?” “Which alerts fired during this time window?” The entire investigation leans on a product manager’s decision about how search should work. That PM might, for example, decide to display only the first 500 results and push the rest into a downloadable CSV file. And this is where the structural issues appear most clearly.

Imagine a platform that has ingested SBOMs, dependency trees, repository metadata, and package manifests. From a data perspective, it knows exactly which repos are impacted by a new CVE. The information exists. The system has the facts.

But the product manager, operating from a normal SaaS mental model, decides that customers will be notified only when the next scheduled scan runs, up to 24 hours later. Maybe this reduces compute cost. Maybe competitors also do it. Maybe early customers didn’t complain.

So the alert for a critical vulnerability arrives the next morning, long after engineers have manually pieced the picture together themselves. Congratulations: your product has failed to prove its value.

The platform technically worked. But it failed at the only moment that mattered.

This is not because PMs don’t care. It’s because they were never told that timeliness in security isn’t a “nice to have.” It’s a prerequisite for relevance.
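The scenario above hinges on a question the platform could already answer. As a minimal sketch, assuming the ingested SBOM and manifest data has been reduced to a map of resolved package versions per repository, matching a new advisory can run the moment the advisory arrives instead of on a 24-hour timer. Everything here is hypothetical and illustrative: the `Advisory` and `DependencyIndex` types, the `on_advisory` handler, the package names, and the placeholder CVE ID. Real version matching (semver ranges, ecosystem-specific rules) is simplified to an explicit set of affected versions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    """Hypothetical advisory shape: one package plus an explicit set of affected versions."""
    cve_id: str
    package: str
    affected_versions: frozenset[str]


class DependencyIndex:
    """Inverted index from (package, version) to the repos that declare it.

    Built from already-ingested SBOM / manifest data, so answering
    "which repos are affected?" is a dictionary lookup, not a re-scan.
    """

    def __init__(self) -> None:
        self._repos_by_pkg: dict[tuple[str, str], set[str]] = defaultdict(set)

    def ingest(self, repo: str, dependencies: dict[str, str]) -> None:
        # dependencies maps package name -> resolved version, e.g. taken from an SBOM
        for package, version in dependencies.items():
            self._repos_by_pkg[(package, version)].add(repo)

    def affected_repos(self, advisory: Advisory) -> set[str]:
        hits: set[str] = set()
        for version in advisory.affected_versions:
            # .get avoids inserting empty entries into the defaultdict
            hits |= self._repos_by_pkg.get((advisory.package, version), set())
        return hits


def on_advisory(index: DependencyIndex, advisory: Advisory) -> list[str]:
    """Event handler: runs when the advisory is published, not when a scheduled scan fires."""
    impacted = sorted(index.affected_repos(advisory))
    for repo in impacted:
        # Stand-in for a real notification channel (pager, chat, ticket).
        print(f"[{advisory.cve_id}] {repo} uses {advisory.package}")
    return impacted


if __name__ == "__main__":
    index = DependencyIndex()
    index.ingest("payments-api", {"example-lib": "2.4.0", "other-lib": "1.0.0"})
    index.ingest("web-frontend", {"example-lib": "2.5.1"})
    index.ingest("batch-jobs", {"unrelated-lib": "0.9.0"})

    advisory = Advisory("CVE-0000-0000", "example-lib", frozenset({"2.4.0", "2.4.1"}))
    on_advisory(index, advisory)  # prints one line, for payments-api
```

The design choice worth noticing is the inverted index: because the facts were already ingested, the per-advisory lookup is cheap compared with re-scanning every repository, which weakens the compute-cost argument for holding the answer until the next scheduled run.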

Why Incidents Break Traditional Product Assumptions

Security products don’t live in the calm corners of everyday SaaS. They’re expected to operate during chaos, ambiguity, and pressure. During incidents, there’s no space for “personas,” no room for “intentional friction,” no patience for multi-click flows.

PMs who haven’t lived incident response often underestimate how quickly engineers need answers, how every additional click feels like a tax, how dangerous blind spots can become, how misleading delayed data can be, how much trust depends on reliability, not aesthetics. A beautifully designed UI is irrelevant if the user cannot get reliable results when it matters. A feature that demos well may offer no value when the user is actually under attack.

This is where the mismatch becomes existential: Security tools are judged not by how they work on a normal day, but by how they perform on the worst day.

Traditional product instincts are optimized for normal days.

But not all security products face this pressure equally. Incident response platforms, SIEM tools, and threat detection systems live and die by their performance and their ability to surface answers under fire. Meanwhile, compliance platforms, vulnerability management tools, and risk quantification systems operate in a steadier rhythm. Their value comes from consistency, completeness, and auditability over time, not split-second response.

The challenge is that many products try to serve both worlds. A vulnerability scanner might be used for quarterly compliance reports and emergency zero-day triage. A cloud security platform might generate executive dashboards and alert on active breaches. The product must work in both modes, which means the PM must understand both failure modes.

The Business Reality No One Wants to Name

Here’s what the article so far has danced around: PMs often aren’t misunderstanding the domain. They’re responding rationally to the incentives they’ve been given.

They are tasked with growing revenue, which means closing enterprise deals. And enterprise deals often hinge on features that look good in procurement checklists: executive dashboards, compliance reports, SSO integration, custom branding, audit logs.

Meanwhile, the security engineer evaluating the tool cares about query latency, detection accuracy, integration ease, and whether the data is fresh enough to matter. These are not the same buyer. The executive signing the contract may never touch the search bar, let alone notice that its results are constrained to a small fraction of the screen, while the engineer relying on it daily feels that limitation immediately. So the PM, seeing where revenue comes from, builds for the buyer, not the user.

This misalignment isn’t the PM’s fault. It’s the company’s incentive structure. A PM who prioritizes incident response workflows over executive dashboards might be technically correct but also miss quota. The real problem is that security companies often don’t know which kind of product they’re building: a tool for practitioners, or a platform for procurement. And in trying to be both, they end up fully serving neither.

And there’s another layer to this: the investor belief that product managers make the best CEOs, especially in security companies. This isn’t just about hiring philosophy. It shapes who gets funded, who gets promoted, and ultimately who controls the product vision. When boards believe that “good PM = future CEO,” they’re less likely to question whether that PM actually understands the threat landscape they’re building defenses against. The bias toward traditional product thinking gets baked into the company’s DNA from the cap table up.

Are Security Engineers Better PMs?

This is not to say that security engineers automatically make better product managers. They don’t magically understand pricing, customer segments, or go-to-market strategy just because they know how TLS works.

But they do have one advantage that is difficult to teach: They know what matters when systems are under threat.

Security engineers intuitively understand why real-time signals matter, why correlation logic must be correct, why false positives destroy adoption, why “export to PDF” is never more important than search latency, why data might exist but remain unsurfaced, why UX includes speed, not just layout. This intuition dramatically changes the roadmap. It grounds product decisions in reality, not hypotheticals.

When a roadmap is driven almost entirely by feature requests and customer calls, it’s often a signal that something deeper is broken. Most feature requests in security don’t originate from “new needs.” They emerge from frustration. From waiting on slow queries, from missing context during investigations, from having to work around gaps that force engineers to build their own scripts or spreadsheets just to get answers.

In other words, customers aren’t asking for features because they want more complexity. They’re asking because they’ve already found alternative ways to solve problems the product should have addressed by default. A roadmap shaped primarily by these requests doesn’t indicate strong customer focus. It often reflects unresolved foundational gaps in the product.

The problem is structural. We expect PMs to make security-critical decisions without equipping them with security-critical mental models.

Security Product Management as Its Own Discipline

The solution is not to replace PMs with engineers. The real solution is to treat security product management as its own specialization, one that blends product thinking with threat-informed decision-making.

But what does that actually look like?

Start with onboarding that doesn’t treat security as just another domain to learn through documentation and customer calls. Put PMs in the room during real incidents, not simulations, not tabletop exercises, but actual SOC analyst shifts where they watch someone try to use their product under pressure. Make them sit through false positive analysis sessions where they see how a poorly tuned detection rule creates hours of wasted time. Walk them through how security data flows through detection pipelines so they understand that “eventual consistency” isn’t just a database concept; it’s a blind spot. Partner them with an engineer who can show them how the tool integrates with existing CI/CD pipelines.

Then embed them with security engineers in a way that actually changes how decisions get made. Not just “talking to customers” once a quarter, but sitting with the people who live in your product during their worst days. A PM who can credibly debate detection logic isn’t collecting feedback. They’re co-designing the system. And when engineers can veto features that compromise core reliability, the roadmap stops being a wishlist and starts being a defensive strategy.

When a PM has to say “let me take it to engineering” to validate a core security decision, it’s often a sign that technical context entered the conversation too late.

But here’s where it gets uncomfortable: the industry also needs to change how it measures PM success. “Shipped features” doesn’t matter if mean-time-to-detection went up. “User engagement” is meaningless if adoption collapses during actual incidents. “Monthly active users” tells you nothing if engineers stop trusting your tool when it matters most. The metrics need to reflect what security products are actually for.
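Mean-time-to-detection, the first metric named above, is simple to compute once incidents carry two timestamps. As a minimal sketch, assuming each incident record stores when malicious activity began and when it was first flagged, MTTD is the average of those gaps. The `Incident` type and its field names are hypothetical; real programs differ on what counts as the start time, and this sketch also reports the median, an addition not taken from the text above, because a single long-dwell incident can dominate the mean.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident record; field names are illustrative."""
    started_at: datetime   # earliest evidence of malicious activity
    detected_at: datetime  # when an alert or analyst first flagged it


def detection_gaps(incidents: list[Incident]) -> list[timedelta]:
    """Time from start of activity to detection, per incident."""
    return [i.detected_at - i.started_at for i in incidents]


def mean_time_to_detection(incidents: list[Incident]) -> timedelta:
    gaps = detection_gaps(incidents)
    if not gaps:
        raise ValueError("no incidents to measure")
    # statistics.mean does not accept timedelta values, so average in seconds
    return timedelta(seconds=mean(g.total_seconds() for g in gaps))


def median_time_to_detection(incidents: list[Incident]) -> timedelta:
    gaps = detection_gaps(incidents)
    if not gaps:
        raise ValueError("no incidents to measure")
    return timedelta(seconds=median(g.total_seconds() for g in gaps))


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 9, 0)
    incidents = [
        Incident(t0, t0 + timedelta(minutes=10)),
        Incident(t0, t0 + timedelta(minutes=20)),
        Incident(t0, t0 + timedelta(hours=5)),
    ]
    print(mean_time_to_detection(incidents))    # 1:50:00
    print(median_time_to_detection(incidents))  # 0:20:00
```

In the usage example, one five-hour incident pulls the mean to nearly two hours while the typical incident was caught in twenty minutes, which is why tracking the trend of both numbers says more than a count of shipped features.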

Some companies are starting to figure this out. Datadog hires PMs with SRE backgrounds for their observability products because they learned that someone who’s been paged at 3am understands urgency differently. The “technical PM” role that requires security engineering experience is becoming more common, and not by accident.

And companies need to be honest about who they’re building for. If you’re a compliance platform, own it. Build the best audit trail and reporting system in the industry. If you’re an incident response tool, own that instead. Stop trying to bolt executive dashboards onto practitioner tools and wondering why neither audience is happy. Segment the product lines. Let the pricing reflect which buyer you’re serving. Stop pretending that the person signing the contract and the person using the tool during a breach have the same needs.

Why This Matters Beyond Just Better Products

When security products fail to meet the moment, the consequences ripple outward. Defenders lose trust in their tools. Incidents take longer to contain. Companies get breached not because defenses didn’t exist, but because they didn’t work when it mattered.

And when defenders stop trusting security products, they start building workarounds. Homegrown scripts. Manual correlation spreadsheets. Engineers who abandon the platform and go back to raw logs. Shadow IT isn’t just an endpoint problem. It’s also a security tool problem. Every workaround is a signal that the product failed to earn its place in the workflow.

The industry doesn’t just need better products. It needs products built by people who understand what they’re defending against.